Private. Local.

Intelligence.

Advanced LLMs running directly on your device, now with Gemma 4 support, smarter retrieval, and stronger imports.

No cloud. No network latency. Total privacy.

Your data.

Your device.

Your intelligence.

Noema bridges the gap between limited hardware and high-quality knowledge. By connecting local models with curated on-device datasets, it delivers absolute privacy without sacrificing capability.

Retrieval-Augmented Generation.

Advanced AI with your own data. Fast, private, and completely offline.

In-App Library

Browse and import complete resources directly inside the app.

Bring Your Data

Import personal documents in TXT, PDF, or EPUB formats for complete offline access.

Local Indexing

Convert datasets into efficient representations stored in a compact on-device database.
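
As a rough illustration of what local indexing can involve, the sketch below splits text into chunks, turns each chunk into a small vector, and stores both in SQLite. The hashed bag-of-words `embed` function, the table layout, and every name here are illustrative assumptions, not Noema's actual pipeline; a real indexer would use a proper embedding model.

```python
import hashlib
import json
import sqlite3

DIM = 64  # illustrative vector size; real embedding models use more dimensions


def embed(text: str) -> list[float]:
    """Toy hashed bag-of-words vector, a stand-in for a real embedding model."""
    vec = [0.0] * DIM
    for word in text.lower().split():
        bucket = int(hashlib.sha256(word.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = sum(v * v for v in vec) ** 0.5
    return [v / norm for v in vec] if norm else vec


def chunk(text: str, size: int = 40) -> list[str]:
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def build_index(db: sqlite3.Connection, text: str) -> int:
    """Store each chunk with its vector; return the number of chunks stored."""
    db.execute(
        "CREATE TABLE IF NOT EXISTS chunks (id INTEGER PRIMARY KEY, body TEXT, vec TEXT)"
    )
    pieces = chunk(text)
    db.executemany(
        "INSERT INTO chunks (body, vec) VALUES (?, ?)",
        [(p, json.dumps(embed(p))) for p in pieces],
    )
    db.commit()
    return len(pieces)


db = sqlite3.connect(":memory:")  # a file path would persist the index on disk
stored = build_index(db, "Photosynthesis converts light into chemical energy. " * 30)
print(stored, "chunks indexed")
```

Storing vectors as JSON text keeps the sketch dependency-free; a production index would more likely use a binary column or a dedicated vector extension.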

Context Injection

Retrieve relevant dataset chunks and inject them into prompts for accurate responses.
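
The retrieval and injection step can be sketched in a few lines. The word-overlap `score` below is a deliberately simple stand-in for vector similarity, and the prompt template is an assumption made for illustration rather than the format Noema uses.

```python
def score(query: str, chunk: str) -> float:
    """Toy relevance score: fraction of query words that appear in the chunk."""
    q = set(query.lower().split())
    c = set(chunk.lower().split())
    return len(q & c) / len(q) if q else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k highest-scoring chunks, best first."""
    return sorted(chunks, key=lambda ch: score(query, ch), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Inject the retrieved chunks ahead of the question."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


chunks = [
    "mitochondria produce most of the energy in cells",
    "the french revolution began in 1789",
    "chloroplasts capture light energy in plant cells",
]
print(build_prompt("how do cells produce energy", chunks))
```

Only the top `k` chunks reach the model, which is how a small context window can still draw on a large dataset.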

Always Available

Once a dataset is imported, it works anytime, anywhere. No connectivity needed. Your knowledge travels with you.

Five local backends.

Choose the on-device runtime that fits your hardware, and switch between high performance and battery conservation as your needs change.

GGUF

The most portable and tunable local backend in Noema. Broad compatibility, flexible quantization, and the deepest performance controls across devices.
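
To make the quantization trade-off concrete, here is a back-of-the-envelope estimate of weight size at a few common GGUF quantization levels. The 7B parameter count is just an example, and the estimate covers weights only; it ignores the KV cache, file metadata, and runtime overhead, so real memory use will be higher.

```python
# Bits per weight for a few llama.cpp quantization formats.
# Q4_0 and Q8_0 store 32 weights per block plus a 16-bit scale.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_size_gb(n_params: float, quant: str) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


for quant in BITS_PER_WEIGHT:
    print(f"{quant}: {weight_size_gb(7e9, quant):.1f} GB for a 7B-parameter model")
```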

See it in action.

Experience the intuitive interface built with deep care and attention to detail. Every pixel matters.

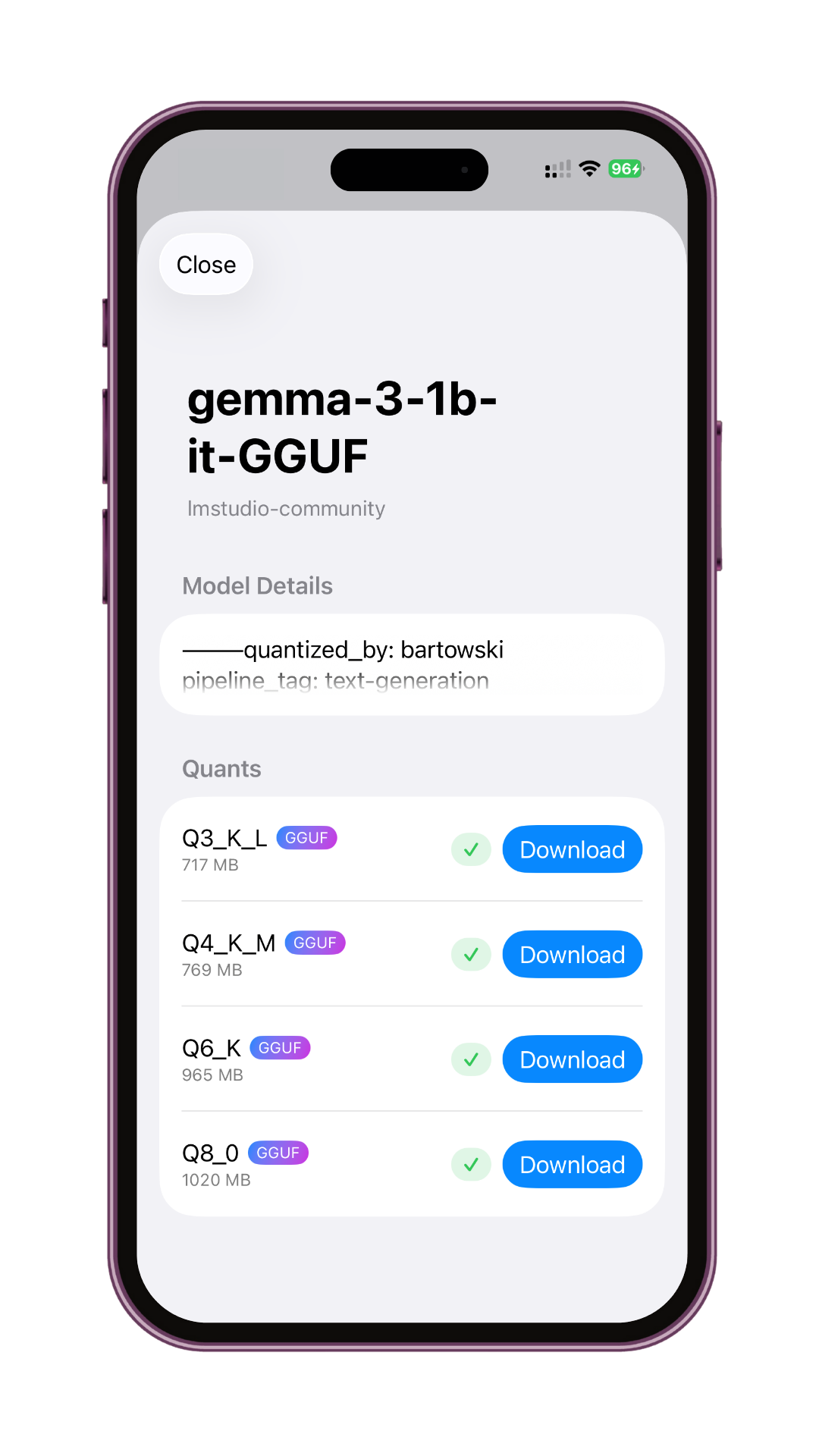

Effortless Model Management

Browse, download, and organize your AI models with our intuitive interface. Integrated Hugging Face search makes discovering new models a breeze.

- One-click model installation

- Automatic dependency management

- Real-time download progress

Intelligent Conversations

Experience seamless AI conversations with advanced context understanding and tool calling capabilities.

- Advanced context awareness

- Built-in tool integration

- Dataset RAG interaction

Deep Customization

Adjust how your AI models respond with advanced temperature settings and personalization options for absolute control.

- Custom model parameters

- Performance optimizations

- Complete local processing

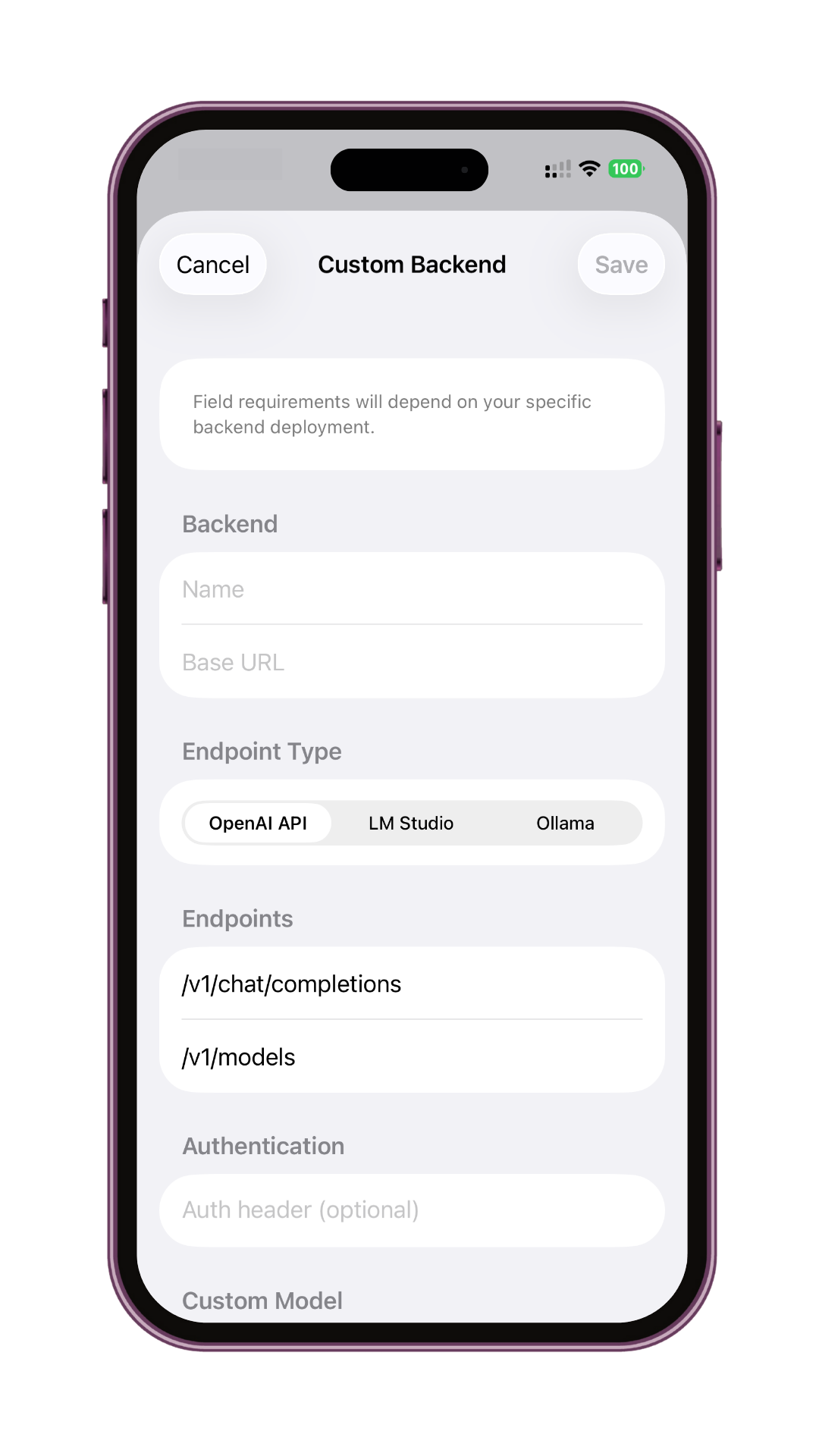

Bring remote inference into your workspace.

Register HTTP-accessible backends to chat seamlessly with models running on a rack server or cloud VM without leaving your trusted environment.

Connect from anywhere

Register remote desktops or servers and speak to them directly through Noema's unified chat.

Provider-aware profiles

Dedicated profiles for OpenAI API, LM Studio, and Ollama endpoints tailored exactly to their needs.

Built-in safety checks

Connection summaries keep you informed. Toggle Off-Grid mode instantly to pause any network traffic.

Just HTTP

Point Noema at any standards-compliant inference endpoint using custom paths, headers, and stops.
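
For a sense of what a custom path, extra headers, and stop sequences amount to on the wire, the sketch below builds an OpenAI-style chat request with Python's standard library. The server address, model name, and token are placeholders, and the helper `build_chat_request` is hypothetical; it is not part of Noema.

```python
import json
import urllib.request


def build_chat_request(base_url, path, model, prompt, headers=None, stops=None):
    """Build (but do not send) a POST request for an OpenAI-style chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if stops:
        payload["stop"] = stops  # sequences at which the server should stop generating
    all_headers = {"Content-Type": "application/json", **(headers or {})}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/" + path.lstrip("/"),
        data=json.dumps(payload).encode("utf-8"),
        headers=all_headers,
        method="POST",
    )


req = build_chat_request(
    base_url="http://192.168.1.50:8080",  # hypothetical server on the local network
    path="/v1/chat/completions",
    model="my-local-model",  # hypothetical model name
    prompt="Summarize chapter 3.",
    headers={"Authorization": "Bearer YOUR_TOKEN"},
    stops=["</s>"],
)
print(req.get_method(), req.full_url)
# urllib.request.urlopen(req) would send it; omitted so the sketch runs offline.
```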

Guided setup

Choose a backend type to pre-fill paths, validate required fields, and capture authorization exactly right.
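
One way to picture guided setup is a table of presets plus a validation pass. The default addresses and chat paths below are the ones each provider documents publicly; whether Noema pre-fills exactly these values is an assumption, and `Profile`, `new_profile`, and `validate` are hypothetical names.

```python
from dataclasses import dataclass

# Publicly documented defaults for each provider; treat them as illustrative presets.
PRESETS = {
    "openai": {
        "base_url": "https://api.openai.com",
        "chat_path": "/v1/chat/completions",
        "needs_key": True,
    },
    "lmstudio": {
        "base_url": "http://localhost:1234",
        "chat_path": "/v1/chat/completions",
        "needs_key": False,
    },
    "ollama": {
        "base_url": "http://localhost:11434",
        "chat_path": "/api/chat",
        "needs_key": False,
    },
}


@dataclass
class Profile:
    kind: str
    base_url: str
    chat_path: str
    api_key: str = ""


def new_profile(kind: str, base_url: str = "", api_key: str = "") -> Profile:
    """Pre-fill a profile from the preset, keeping any value the user supplied."""
    preset = PRESETS[kind]
    return Profile(kind, base_url or preset["base_url"], preset["chat_path"], api_key)


def validate(profile: Profile) -> list[str]:
    """Return a list of problems; an empty list means the profile looks usable."""
    problems = []
    if not profile.base_url.startswith(("http://", "https://")):
        problems.append("base_url must start with http:// or https://")
    if PRESETS[profile.kind]["needs_key"] and not profile.api_key:
        problems.append("api_key is required for this backend type")
    return problems


print(validate(new_profile("ollama")))
print(validate(new_profile("openai")))
```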

A comprehensive toolkit.

Experience the future of offline AI interaction. Noema ships with everything you need to run deeply agentic logic securely.

Model Context Protocol

A tool-calling protocol that lets your AI model search autonomously and perform agentic actions, going beyond standard chat.

Dataset Integration

Access Open Textbook Library resources through RAG, without filling the context window with whole books or requiring model fine-tuning.

Personal Knowledge

Upload your own documents to query across a private knowledge base for contextually relevant answers.

Functions & MCP

Execute functions and interact with external tools seamlessly, giving your models real-world agency.
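
Tool calls in the Model Context Protocol travel as JSON-RPC 2.0 messages with the method `tools/call`. The sketch below is a heavily simplified dispatcher for that one message type: it skips initialization, transports, and schemas, and the two tools are hypothetical examples rather than anything Noema ships.

```python
import json


# Hypothetical tools; a real app would register its own.
def word_count(text: str) -> str:
    return str(len(text.split()))


def shout(text: str) -> str:
    return text.upper()


TOOLS = {"word_count": word_count, "shout": shout}


def reply(req_id, key: str, value: dict) -> str:
    """Wrap a result or error in a JSON-RPC 2.0 response envelope."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, key: value})


def handle(raw: str) -> str:
    """Handle one JSON-RPC 2.0 'tools/call' request and return the JSON response."""
    req = json.loads(raw)
    if req.get("method") != "tools/call":
        return reply(req.get("id"), "error", {"code": -32601, "message": "Method not found"})
    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        return reply(req.get("id"), "error", {"code": -32602, "message": "Unknown tool"})
    text = tool(**params.get("arguments", {}))
    return reply(req.get("id"), "result", {"content": [{"type": "text", "text": text}], "isError": False})


request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "word_count", "arguments": {"text": "private local intelligence"}},
}
print(handle(json.dumps(request)))
```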

GPU Acceleration

Harness the full power of your hardware with optimized GPU support, from Apple Silicon to custom rigs.

Absolute Privacy

With local models, your conversations and data never leave your device, and everything keeps working offline.

Native iOS Feel

An intuitive interface inspired by the best native chat applications, designed strictly for productivity.

One-Click Installs

Download and set up massive language models effortlessly with a streamlined, error-free installation process.

Model Discovery

Find the perfect model for your needs with intelligent search, trending lists, and provider recommendations.

Free forever.

Noema is committed to democratizing AI. The entire experience—unlimited access, remote endpoints, and every feature—is absolutely free for everyone.

Open source.

Truth thrives in the light. Our entire underlying architecture and core logic are open source, ensuring transparency and community collaboration.

View on GitHub

Transform your

intelligence.

Start running local AI models, no strings attached. Real privacy, real speed, directly on your personal devices.